21 = 3 x 7

The Long Road from 21 to Cryptographically Relevant Quantum Computers

Do you remember elementary school math?

Factoring numbers into prime factors?

That’s how we measure one of the core capabilities of quantum computers today.

In 2001, IBM managed to factor the number 15 using a quantum computer.

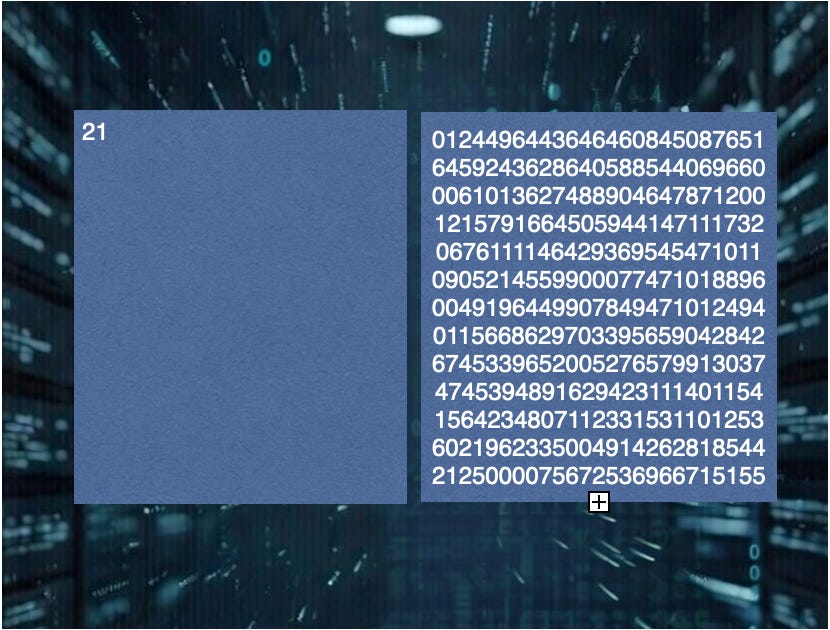

Eleven years later, in 2012, researchers in the UK factored 21, which remains the largest number ever factored by a genuine implementation of Shor’s algorithm on quantum infrastructure.
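To make concrete what "factoring with Shor’s algorithm" means, here is a minimal classical sketch of its number-theoretic skeleton. The order-finding step, brute-forced here in `find_order`, is the part a quantum computer is supposed to speed up. The function names and the bases 7 and 2 are illustrative assumptions, not details taken from the 2001 or 2012 experiments.

```python
from math import gcd


def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, found by brute force.

    In Shor's algorithm this is the step delegated to the quantum computer.
    Assumes gcd(a, n) == 1, otherwise the loop would never terminate.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_order(a: int, n: int):
    """Classical post-processing: turn the order of a mod n into factors of n."""
    if gcd(a, n) != 1:
        raise ValueError("a must be coprime to n")
    r = find_order(a, n)
    if r % 2 == 1:
        return None  # odd order: this base does not help, try another one
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None  # trivial square root of 1: try another base
    return tuple(sorted((gcd(half - 1, n), gcd(half + 1, n))))


print(factor_from_order(7, 15))  # (3, 5)
print(factor_from_order(2, 21))  # (3, 7)
```

For 15 and 21 the brute-force loop finishes instantly. The whole difficulty lies in doing the same for numbers where no classical loop could ever finish.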

Since then, setting aside hybrid shortcuts, there has been no further progress.

We have now been waiting 14 years for the factorization of 35, which would already be a meaningful milestone, given that the demands on energy, system stability, and error correction grow exponentially.

To even begin talking seriously about threatening modern cryptography, we would need to solve a quantum problem equivalent to factoring numbers on the order of 1024+ bits — that is, integers with roughly 310 decimal digits.
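The conversion between bits and decimal digits is easy to check. The short sketch below (the helper names are made up for illustration) counts the digits of the largest 1024-bit integer and compares that with the 5 bits needed to write down 21.

```python
import math


def decimal_digits(bits: int) -> int:
    """Decimal digits of the largest integer that fits in `bits` bits."""
    # Python integers have arbitrary precision, so 2**1024 is computed exactly.
    return len(str(2**bits - 1))


def approx_digits(bits: int) -> float:
    """Rule of thumb: each bit is worth log10(2), about 0.301 decimal digits."""
    return bits * math.log10(2)


print((21).bit_length())       # 5 bits are enough to write 21
print(decimal_digits(1024))    # 309
print(approx_digits(1024))     # a little over 308
```

So "roughly 310 digits" is the right order of magnitude for 1024 bits, against a record that currently stands at a 5-bit number.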

Yes.

Between 21 and 1024+ bits stands a mountain of hard, unresolved physics.

And that number 21? We’re quite used to it in Bitcoin.